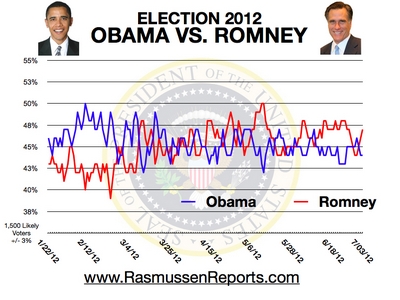

Rasmussen's reading of Obunga's "dead-cat" bounce over obungacare:

Rasmussen is the most accurate poll and surveys likely voters. My own take is that Obunga would have to be at 52% right now to have any sort of shot at the election, given the forces lining up against him.

I see it as slightly better than even odds that the Dems throw Bork under the bus and run Steny Hoyer for president in November.